EFT and Amazon S3 storage

#14 Top Tip for Globalscape EFT Server

Stats for 2023 show that AWS held 33% of the global cloud infrastructure services market in Q1, and research suggests that around 60% of corporate data is stored in the cloud. This trend in cloud adoption shows no sign of slowing down: it is estimated that 200 zettabytes of data will be stored in the cloud by 2025.

Globalscape understands the importance of cloud storage integration with its products, and therefore offers the ability to utilise Amazon S3 storage with Globalscape EFT.

The combination of Globalscape EFT and AWS S3 Storage provides businesses with a powerful data management solution that enhances security, scalability, and efficiency. By leveraging EFT's secure file transfer capabilities and integrating with AWS S3's scalable storage, businesses can optimize their data workflows, ensure compliance, and take advantage of the vast AWS ecosystem.

To establish a connection between Globalscape EFT and AWS S3, you need to configure the appropriate settings in both systems. Within Globalscape EFT, set up an S3 connection profile by providing your AWS access credentials.

We recommend creating a “connection profile” that you can reuse in event rules, rather than defining external servers every time you create a new rule.

Steps to define a connection profile

- Right-click “Connection Profiles”, then click “New Connection Profile”.

- In the “Connection Profile name” box, provide a name for the profile.

- In the “Description” box, provide a description for the profile.

- In the “Connection details > Protocol” area, select “Cloud storage connectors”.

- Specify the “Bucket name”, “S3 region”, “Authentication”, “Access key”, and “Secret key”, then “Proxy” and “Advanced” options if required.

- To verify the connection settings, click Test.

The connection profile will then be ready for you to utilise in your projects to transfer files using S3 buckets.

EFT does not perform any validation on the bucket name you enter. Be aware of AWS restrictions when creating the name.

For more information regarding restrictions, limitations, and naming, refer to Creating object key names in the Amazon documentation.
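Because EFT does not validate the bucket name, it can help to check a name before entering it in the connection profile. The following is a minimal sketch in Python, not part of EFT. The function name `bucket_name_problems` is hypothetical, and the rules encoded here are a partial summary of the AWS naming rules for general purpose buckets as commonly documented; check the current Amazon documentation before relying on them.

```python
import re
from typing import List

# Assumed rule summary (not exhaustive): 3-63 characters, lowercase letters,
# digits, dots and hyphens only, starting and ending with a letter or digit.
_ALLOWED = re.compile(r"^[a-z0-9][a-z0-9.-]*[a-z0-9]$")
_IP_LIKE = re.compile(r"^\d{1,3}(\.\d{1,3}){3}$")


def bucket_name_problems(name: str) -> List[str]:
    """Return a list of problems found; an empty list means none were found."""
    problems = []
    if not 3 <= len(name) <= 63:
        problems.append("length must be between 3 and 63 characters")
    if not _ALLOWED.match(name):
        problems.append(
            "use only lowercase letters, digits, dots and hyphens, "
            "and start and end with a letter or digit"
        )
    if ".." in name:
        problems.append("dots must not be adjacent")
    if _IP_LIKE.match(name):
        problems.append("must not be formatted like an IP address")
    if name.startswith("xn--"):
        problems.append("must not start with the prefix xn--")
    if name.endswith("-s3alias"):
        problems.append("must not end with the suffix -s3alias")
    return problems


if __name__ == "__main__":
    for candidate in ["eft-test-bucket", "EFT_Test_Bucket", "192.168.0.1"]:
        print(candidate, bucket_name_problems(candidate))
```

An empty list only means that none of the checks in this sketch failed; AWS may still reject a name, for example because it is already taken.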

Not on the latest version? Here is the original blog:

This Top Tip shows you how to get started using EFT and Amazon S3 storage. You can reference Amazon S3 storage, RDS Databases and EC2 servers within the AWE engine in EFT Enterprise. The functionality is hidden but not disabled.

Step 1: Create an S3 session

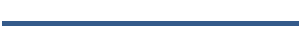

- Add in an S3 element to your AWE and then select ‘create session’ from the activity option.

- Add the access key pair that is defined against the S3 bucket for authentication.

- You can leave all other options as default values.

Step 2: Write a file up to S3 storage

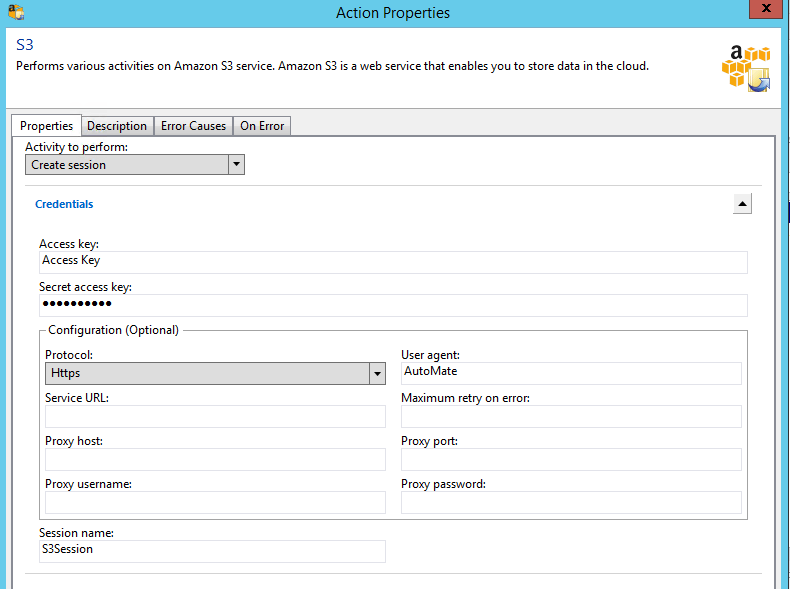

- For ‘activity’, select ‘Put object’.

- The file to be written to S3 can use variables such as %FS_PATH% or a physical file path.

- The bucket name is the defined name of your S3 bucket.

- The Key Name is the name you wish the file to be called. Using the variable %FS_FILE_NAME% would apply the same file name defined in the FS_PATH variable. You could also build a variable to contain other strings such as dates.

- S3 does not have a file structure as such. You can create and reference folders inside it though, to make it act like a file system. To write a file to a folder, simply add the folder name at the start of the Key Name. Be aware that folders use a / character and that Key Names are case sensitive. So /mark/mark.txt is not the same file as /Mark/Mark.txt, and \Mark\Mark.txt is invalid.

- Please be aware that this does not handle wildcards particularly well. If you want to put multiple files up to an S3 bucket, use a file loop and write each file one at a time. This will allow you to define each file name as it is uploaded.

- Almost every other setting on the ‘Put object’ dialog box can be left as default. Just make sure the S3 session created in the previous step is selected.
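The key-naming rules above (forward slashes for folders, case-sensitive names, one file per upload) can be sketched outside EFT as follows. This is an illustrative Python sketch, not how the AWE engine builds key names. The helpers `build_key` and `plan_uploads` are hypothetical, and dropping any leading slash is a choice made here to match the `mark/...` style used in the sample AML.

```python
from pathlib import PureWindowsPath


def build_key(folder, file_name, stamp=""):
    """Join an optional folder prefix and a file name into a key using '/'."""
    # Keys use forward slashes, so convert backslashes instead of passing them on.
    parts = [p for p in folder.replace("\\", "/").split("/") if p]
    if stamp:
        # Insert an extra string (for example a date) before the extension.
        stem, dot, ext = file_name.rpartition(".")
        file_name = f"{stem}_{stamp}{dot}{ext}" if dot else f"{file_name}_{stamp}"
    parts.append(file_name)
    return "/".join(parts)


def plan_uploads(local_paths, folder):
    """Return one (local_path, key) pair per file, mirroring a file loop."""
    return [(p, build_key(folder, PureWindowsPath(p).name)) for p in local_paths]


if __name__ == "__main__":
    files = [r"C:\Users\Mark\Desktop\a.zip", r"C:\Users\Mark\Desktop\b.zip"]
    for local_path, key in plan_uploads(files, "mark"):
        print(local_path, "->", key)
    # Case matters: these are two different keys.
    print(build_key("mark", "mark.txt"), build_key("Mark", "Mark.txt"))
```

Planning each pair separately corresponds to the file loop recommended above: every file gets its own explicit key name instead of relying on a wildcard.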

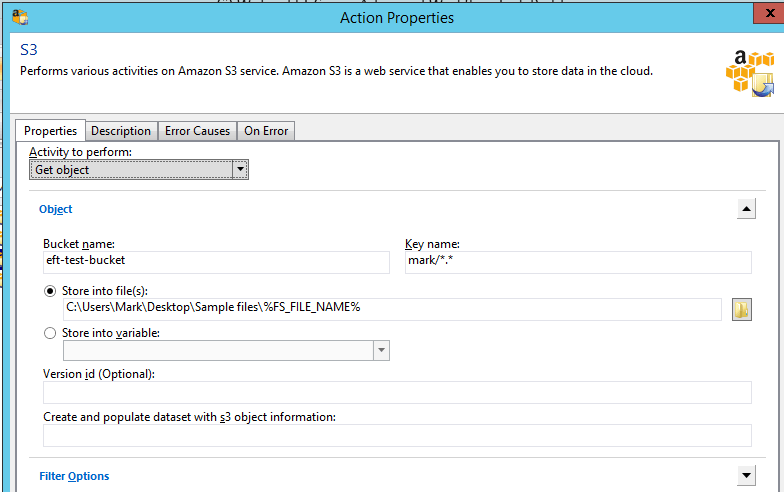

Step 3: Getting a file

- Pulling a file down from an S3 bucket is a similar process, but using the ‘Get object’ activity.

- Take extra care with the file paths and file names, which are case sensitive.

- ‘Get object’ can use wildcards to define multiple files.
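
To see why case sensitivity matters when a wildcard selects several files, here is a small Python sketch. It uses the standard library's `fnmatchcase` as a stand-in for wildcard matching; this is an assumption for illustration only and may not match how EFT evaluates wildcards. The helper `matching_keys` and the sample key names are hypothetical.

```python
from fnmatch import fnmatchcase


def matching_keys(keys, pattern):
    """Return the keys that match a wildcard pattern, compared case-sensitively."""
    return [k for k in keys if fnmatchcase(k, pattern)]


if __name__ == "__main__":
    keys = ["mark/report.zip", "Mark/Report.zip", "mark/notes.txt"]
    # Only the lowercase folder matches; "Mark/Report.zip" is a different key.
    print(matching_keys(keys, "mark/*.zip"))  # ['mark/report.zip']
    print(matching_keys(keys, "mark/*.*"))    # ['mark/report.zip', 'mark/notes.txt']
```

A pattern written with the wrong case simply matches nothing, so it is worth checking the exact key names in the bucket first.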

Once the AWE script has been built, you can call it from an Event rule in the normal way.

Sample

The AML for a sample ‘write and get’ is below. You will need to define the access key and secret key to get the script to work.

<AMAWSS3 ACTIVITY="create_session" ACCESSKEY="Access Key " SECRETKEY="AM2iAWndJRgKZS+BbZ012AQlL4Fu3T3YFuQaME" PROTOCOL="https" />

<AMAWSS3 ACTIVITY="put_object" BUCKETNAME="eft-test-bucket" KEYNAME="mark/*.zip" FILE="C:\Users\Mark\Desktop\*.zip" />

<AMAWSS3 BUCKETNAME="eft-test-bucket" KEYNAME="mark/*.*" FILE="C:\Users\Mark\Desktop\Sample files\%FS_FILE_NAME%" />

<AMAWSS3 ACTIVITY="end_session" />
